Nov 5, 2022

In 2017 I set up a Mastodon account on mastodon.social to explore that federated micro-blogging experience and basically did nothing with it.

Fast-forward to 2022 and Mastodon is hawt due to billionaire-inflicted decay at Twitter and now I get to be a little smug, if I want. I think I deserve that, a bit.

I just migrated to mastodon.ie, a local instance for local people, to avoid the incomprehensible firehose that is the dot social local timeline.

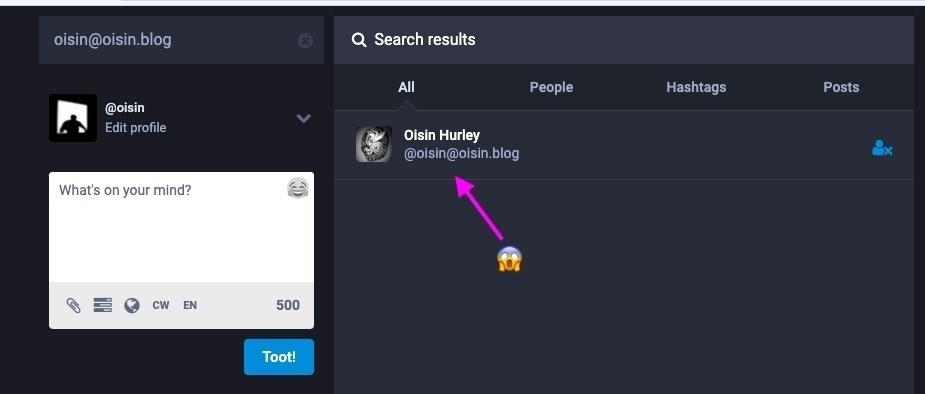

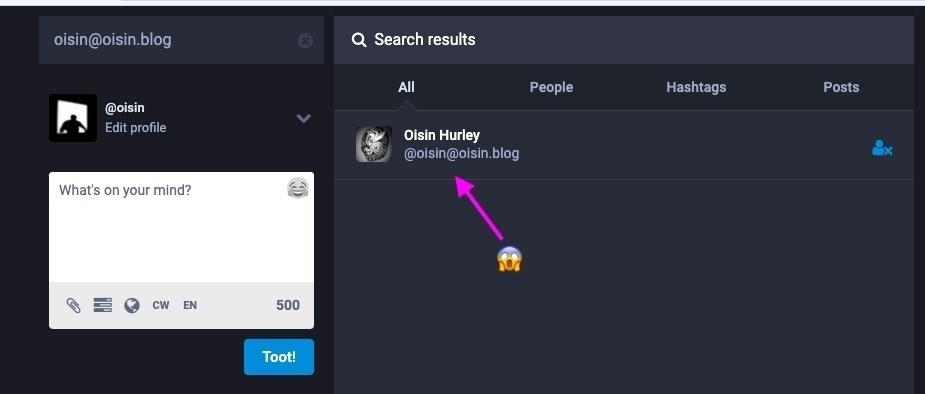

Of course, I forgot entirely that the geniuses at this venue have already built the ActivityPub protocol into micro.blog, and I effectively have a “Mastodon account” right here - you can see it from the Mastodon web app.

I am not sure what to do with this information at this time.

Oct 25, 2021

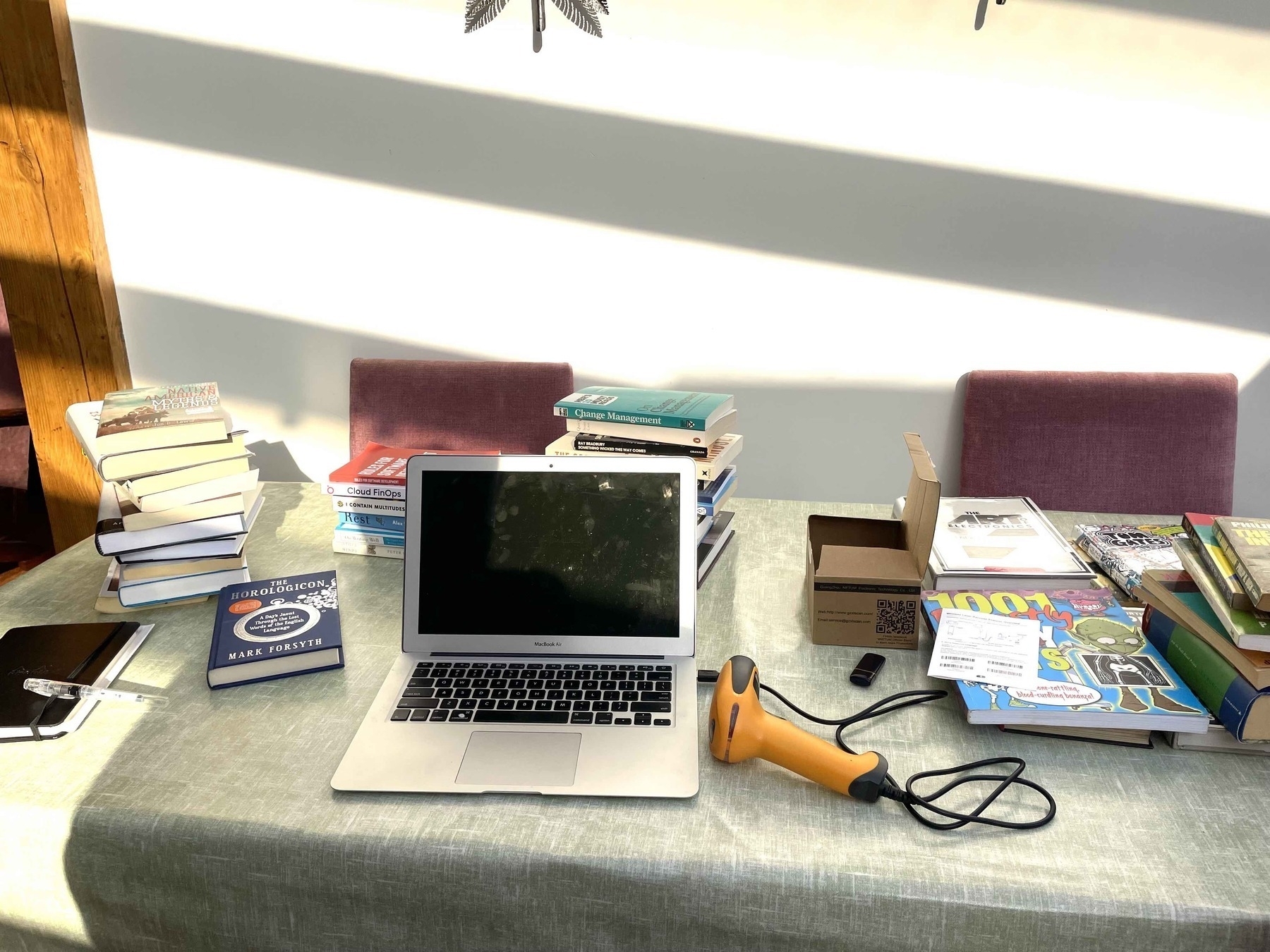

Nearly three years ago I bought a bar code scanner and threatened to catalog all the books on my shelves. That didn’t happen, unfortunately – nothing to do with having a short attention span and an unhealthy attitude toward keeping books unnecessarily, but definitely because non-subscription ISBN-to-Book-Data APIs were not so great at the time.

At the time of writing, I’m midway through a book purge, making space on the burgeoning shelves so that the new, unread books that reside on the floor can go up in the world. This would usually be no issue. I’ll shift out the books I want to hang onto the least to make room. But, during these plague years, transferring books to second-hand stores has become a little more involved. No more dropping into the second-hand section with a crate of crinkly, soft-spined paperbacks and enjoying a relaxed chat with the book nerd tasked with assessing the haul. Now an appointment must be made, and the shops are more focussed on their intake, with instructions on what to bring, or not.

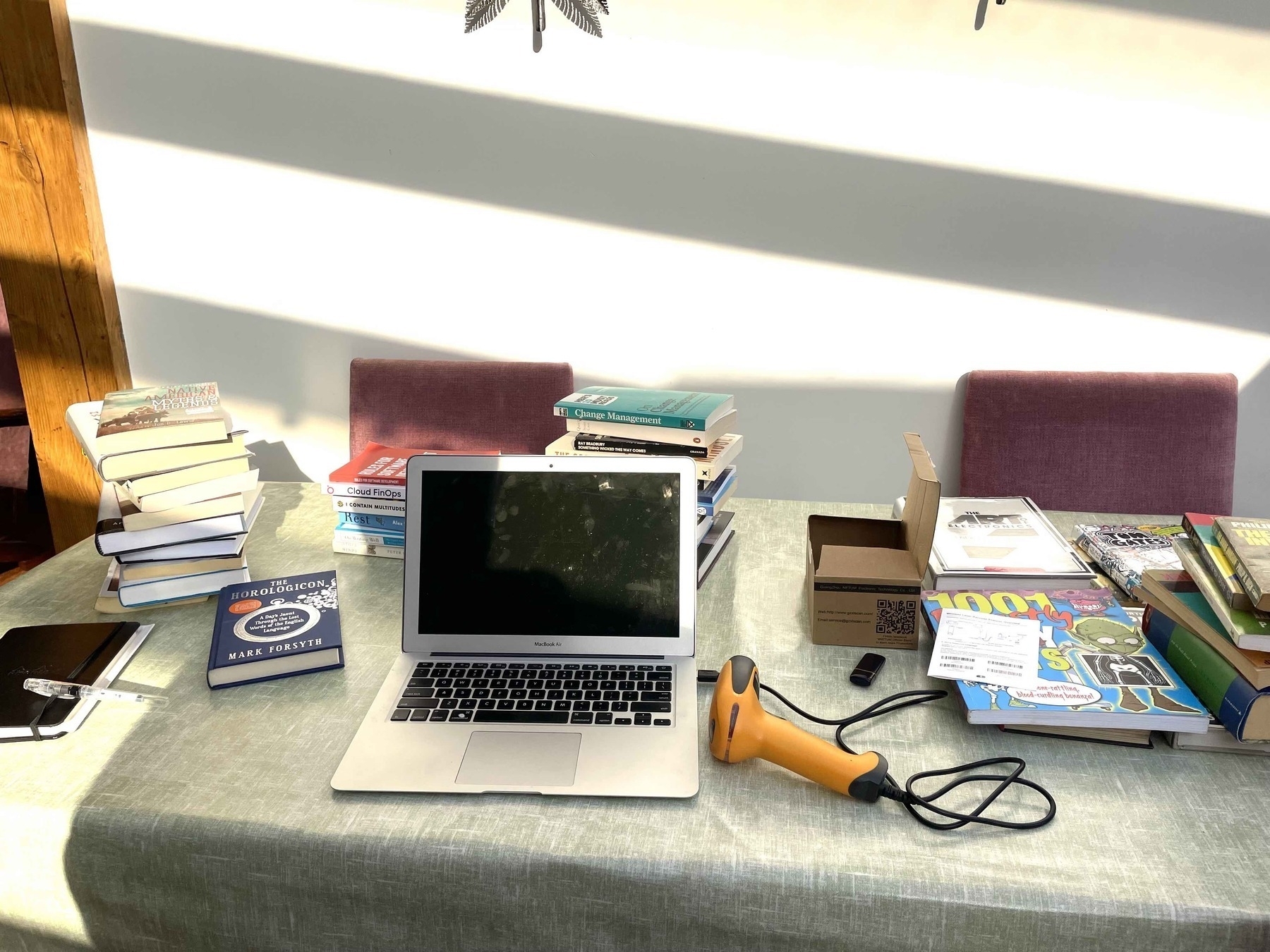

With a stack of forty-odd books to shift, I reckoned the best way to make it easy for the bookshop team was to get the barcode scanner back up and running, bip all the books in the to-go pile, and email the book acquisition team a spreadsheet with enough information to allow them to scratch the books they would not accept.

It also seemed like a good time to record the books I swore I was going to read, and try to focus on reading them before the holidays at the end of the year. Because, I will be asking for more books.

Step 1 - Collect the ISBNs. The barcode scanner does this. Plug it into a computer via a USB cable and it pretends to be a keyboard. Every time you bip, it ‘types’ the ISBN number. This model allows you to bip away like crazy while not connected to a computer, and it will remember the ISBNs (‘inventory mode’). Then you scan a special barcode and it will type all the ISBNs just like you’ve done a big copy-n-paste.
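A bipped list can pick up the odd stray line, so it may be worth checking each number before looking it up. What follows is a minimal sketch, not part of isbngrab: the helper names are made up for illustration, and the only facts it leans on are the standard ISBN-10 and ISBN-13 check digit rules.

```ruby
# Hypothetical helpers for sanity-checking scanned ISBNs.
# These names are illustrative and are not taken from the isbngrab script.

# Remove hyphens and whitespace, and upper-case a trailing "x" check digit.
def normalize_isbn(raw)
  raw.to_s.gsub(/[\s-]/, "").upcase
end

# ISBN-10: multiply the characters by weights 10 down to 1; the total must be
# divisible by 11. A final "X" stands for the value 10.
def valid_isbn10?(isbn)
  return false unless isbn.match?(/\A\d{9}[\dX]\z/)

  weighted = isbn.chars.each_with_index.map do |ch, i|
    (ch == "X" ? 10 : ch.to_i) * (10 - i)
  end
  (weighted.sum % 11).zero?
end

# ISBN-13: multiply the digits alternately by 1 and 3; the total must be
# divisible by 10.
def valid_isbn13?(isbn)
  return false unless isbn.match?(/\A\d{13}\z/)

  weighted = isbn.chars.each_with_index.map { |ch, i| ch.to_i * (i.even? ? 1 : 3) }
  (weighted.sum % 10).zero?
end

# True when the input is a well-formed ISBN-10 or ISBN-13 after normalizing.
def valid_isbn?(raw)
  isbn = normalize_isbn(raw)
  valid_isbn10?(isbn) || valid_isbn13?(isbn)
end
```

Calling `valid_isbn?` on each line of the ISBNs file before Step 2 would let a mis-scan be re-bipped rather than sent to the API.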

Step 2 - Write some code to query an API with the collected ISBNs and pull the details. This was an issue the last time around, but I found out that the Google Books Volume API is now quite decent for 0- (English, 10 digit) and 1- (English, 13 digit) books. If you pop by book sources at Wikipedia there are more sources, some free, some subscription. The resulting Ruby script lives on GitHub at isbngrab.
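For a flavour of what such a lookup involves, here is a rough sketch. It is not the isbngrab source. It assumes the public volumes endpoint with an `isbn:` query, that unauthenticated requests are acceptable for a small batch, and that the fields of interest sit under `volumeInfo` in the first returned item. The method names are invented for this example.

```ruby
require "json"
require "net/http"
require "uri"

# Public Google Books volumes endpoint.
GOOGLE_BOOKS_ENDPOINT = "https://www.googleapis.com/books/v1/volumes"

# Build the search URI for a single ISBN (hypothetical helper name).
def volume_query_uri(isbn)
  uri = URI(GOOGLE_BOOKS_ENDPOINT)
  uri.query = URI.encode_www_form(q: "isbn:#{isbn}")
  uri
end

# Pull a few fields out of a response body. When the API finds nothing, the
# "items" key is absent, so an empty record is returned instead of raising.
def extract_book(isbn, body)
  data = JSON.parse(body)
  info = data.fetch("items", []).first&.fetch("volumeInfo", nil)
  return { isbn: isbn, title: nil, authors: [], publisher: nil, published: nil } if info.nil?

  {
    isbn: isbn,
    title: info["title"],
    authors: info.fetch("authors", []),
    publisher: info["publisher"],
    published: info["publishedDate"]
  }
end

# Fetch and parse one ISBN; returns nil on any non-2xx response.
def lookup(isbn)
  response = Net::HTTP.get_response(volume_query_uri(isbn))
  return nil unless response.is_a?(Net::HTTPSuccess)

  extract_book(isbn, response.body)
end
```

Keeping the parsing separate from the HTTP call means the field extraction can be tried against a saved response without hitting the network.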

Step 3 - Profit! From the results of labour, that is. There’s an asciicast of the workflow below. The cat command at the start opens the file ISBNs for editing and the bipper types in the numbers. I’m pressing Ctrl-D at the end to close the file, running the script from Step 2 and then showing what is in the output file.

Step 4 - Upload the CSV file to Google Docs, or wherever, and email a public link to the shop so they can check it out.
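The CSV itself needs very little code. This is a small sketch of how the looked-up records might be written out, assuming each record is a hash with `:isbn`, `:title`, `:authors`, `:publisher` and `:published` keys. Those key names and the column headings are my own choice here, not necessarily what isbngrab emits.

```ruby
require "csv"

# Turn an array of book hashes into CSV text with a header row.
# Authors are joined with "; " so that each book stays on a single row.
def books_to_csv(books)
  CSV.generate do |csv|
    csv << %w[ISBN Title Authors Publisher Published]
    books.each do |book|
      csv << [
        book[:isbn],
        book[:title],
        Array(book[:authors]).join("; "),
        book[:publisher],
        book[:published]
      ]
    end
  end
end
```

Using the CSV library rather than joining strings by hand means titles containing commas or quotes are escaped properly, which matters once the file is opened as a spreadsheet.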

Oct 20, 2021

Another one from the Archives, this time about having an Open Source related part of your business. This was published in August 2011, drafted a number of years before that, and I’ve left in the exact text, pompous parts and all. When I wrote it, I didn’t mention approaches such as Source Available, even though they were well understood at the time, and if I was to write it again now, I would include Inner Source as a strong step towards Open Source.

This was written as if I was instructing a company, so when you read You in these sentences, it is addressing the person who got the short straw and needs to set up an Open Source Program. They are doing their plans, and attempting to construct a budget.

I don’t maintain comments here, but if you want to pick me up on anything I am @oisin on Twitter.

Backstory: I’m spending some time trying to find 10 spare gigs on my laptop to house the monster Xcode 4 install. During the cleanup, I’ve unearthed several dozen bog bodies from my previous software and employment versions. Some are embarrassing, but they are like eccentric relatives, I cannot bring myself to deny them, plus I enjoy seeing the effect they have on other people. So, rather than throwing them out, I thought I might share them here so that we can all have a laugh.

This one was from when I worked in an Open Source organization embedded in a Closed Source organization – when OSS suddenly became trendy in Enterprise Software. It was a draft of a primer of how to do Open Source When You Have No Idea How It Works, meaning how to take part in projects and run a stable of OSS developers successfully. I don’t think it got beyond a second draft. I still think OSS is a better way to do most things, however it does have its own pathologies, which at least are fairly visible.

Committing to Open Source

So, you want to try out this OSS thing. It’s the new hotness in the press, and your competitors are all about competing only on the 20% and contributing to and using Open Source for 80% of their delivery. You could get fired if you don’t jump on the bandwagon. Measuring your success is crucial. Your old metric of revenue linked to products won’t really work with Open Source. You will need to build a force of highly-competent developers that can consistently deliver value to customers.

OSS is a services engagement in most cases. Customers must be kept happy – you need capable and competent developers and you got to have a plan to keep them coming on-stream. This is vital when you are dealing with potentially the most mobile workforce in the world.

Key Aspects of Open Source (OSS) vs. Secret Source (SSS)

- SSS is about chain-of-command; OSS is about tribal pressures

- SSS is about running a factory; OSS is about being a patron

- SSS is about being regulated and policed; OSS is about self regulation by application of taboo

- SSS success is about moving up the hierarchy; OSS success is about moving out into the world

Patronage in OSS

You are a patron. Think about how that model works – those receiving patronage want to practice their craft. Those giving patronage want to enhance their standing amongst their industry peers. By enhancing their standing the patron can build a reputation and upon that, a dominance.

Those receiving patronage will brook some interference in the execution of their tasks, but not much, so you need to maintain a loosely-coupled connection to the body corporate. This can be difficult. You need someone who has trust from the stable of developers, and also can communicate the corporate messages in a way that makes sense – really this is not about spouting corporate goals, but being able to construct competitive challenges like – “We want to kill XYZ, how do we do it?”, or “That QRPX repository looks good, but we can do better”. Basic, tribal goals, not code-speak for corporates, no mission statements.

OSS developer is a highly mobile position. If you are a good patron, your reputation will spread. You build the reputation for a year or two, then you can start trying to poach names from the other projects that you are up against, poach the bloggers, do this by word-of-mouth. Every single one you persuade will give you PR you can’t manufacture. You are also hitting those projects where it hurts, by taking their staff directly.

How to be a Good Patron

What you do is strengthen each developer’s community immersion. One of the big winners is to increase their physical presence in the OSS milieu. Allow each developer to attend two conferences per year, more if they are speaking. Profile the conferences, and make places available, limit them to encourage submissions. Review your guys’ blogs and suggest topics to them, keep feeding them competitive nuggets. You also need to let them investigate stuff that is new and interesting. Some small-scale and locally-constrained brownian motion is fine, as long as the general trend is moving forward. Make sure you send them to competitors’ conferences, even things like Microsoft conferences. These people are your eyes and ears, developers are mostly honest, and they will give you a level of relevant information you have never had before.

Making Good OSS Developers

Firstly, keep the ones you have. If you are a Good Patron, your attrition should be tiny. However, you need to educate developers to turn them into OSS developers, it is not an instinctive thing. Programs for committership must be established. A mentoring system must be present. Committers must be regularly assessed on their contributions in the areas of Code, Documentation, Bugfixing, Customer Queries, Outreach (blogs/speaking/etc). Developers must have training and committership in more than one project, and may move on to project-centric, or customer engagement-centric majors. Competencies need to be tracked, and a gating system put in place for customer engagements, based on using this competency system. This will help keep a strong reputation for technical and product capability in field assignments.

Some of the Roles/Structures You Need

Much of what follows is stating the obvious. There is a strong bias toward the developer satisfaction theme here, since that is the element that is the most different in approach to the SSS method.

- The Project Selection Group – a small group that discusses and decides what projects to be involved in, what to pull out from, what projects to start and promote, etc. Meets quarterly and produces an auditable decision trail. Revisit decisions on substantive grounds only after a test period to ensure commitment.

- The Community Manager – one committer in a project has the role of Community Manager. They have a number of responsibilities. One is as the eyes and ears of the project selection group as to the state of the project, community health, diversity, etc. Another is to help to ensure the smooth running of the project and its delivery to users, so the community manager role should be strongly biased towards repeatable builds, good website material, strong demos, blogging about the project, outreach at conferences and the like. Also this person is responsible for gauging developer performance in the project (doc, code, marketing, customers). One per project. All project leads and community manager folks must blog about the project and aggregate any blogs that regularly mention it. Everyone needs to read these.

- The Doc Team – if one thing adds value to OSS, it’s the documentation. The Doc Team gets close with the community manager to see where the docs are missing, what needs to be done, etc. An enhancement to documentation on the OSS project website is a big plus to the project, but excellent comprehensive documentation is something that people will pay for.

- The Tools team – another value add is pain reduction. Hard-core developers will have no issues working directly with the OSS project elements as downloaded from the website. The great majority of developers will need tools. A dedicated team is required to produce tools and shortcuts to help customers in certain places to increase productivity. Act on feedback from the services guys, other field reps and the community.

- The Distro team – there’s a group of individuals that are responsible for keeping the distributions ticking over and building – they are responsible for nightlies, weeklies, integration builds and the eventual distribution. They make the distros.

- The Test team – team that builds integration tests that are used as smoke tests for distro quality. They also design the tests.

- Web team – don’t have one of these – outsource it, but make sure the developers can update at any time.

- Marketing Support – primary marketers in the OSS community are the developers – they are the reputation enhancers. However more advertising-style marketing is required that is full-time. Competition is rife in these communities and it goes to the deepest level. Every now and then stir the pot with some controversy. People can’t help but respond. Don’t be destructive – keep the bridges uncharred.

- Administrative Support – office management, expenses, travel arrangements, etc are all things that developers are not so good at.

- Training/Services – competency-based tracking of developers employed to stream capabilities to customers. It is important that every developer takes some time every year to be in front of a customer.

- Legal – maximally important when engaging with enterprise customers. Consider paralegal education for developers, or at least support them where feasible. Employ lawyers who have provenance in this area.

Dealing with the Mud-Slinging

When operating (‘competing’) in the OSS arena, attacks from others can be directed towards the software, or to the organization. Challenges to the software are easily met – if they are untrue, show that is the case; if they are true, then address the issue, and make a show that you have addressed it and that your detractor will have to think of something else to complain about. Attacks on the organization are generally harder to grasp.

The top four muddy missiles are usually the following (where for X insert company name):

- X has not acted in the best interest of the community

- X has been divisive in the community

- X has been spreading FUD (fear, uncertainty, doubt)

- Our software has displaced X in every account we went into

These kinds of accusations are reputation-tarnishers and need to be addressed. Accusers need to explain their assertion, and it needs to be dealt with in the open. They will need to cite examples for any challenge. Be sure to track every single engagement with them at the customer level. Build competencies in competitor product so that you can keep ahead – delegate a team to track and build small systems with the competitor product. Competition needs to be strong and you need to be up to date all the time, and be ready with insight or analysis, indeed produce these regularly.

If you come across failures in competitive products in real-world scenarios, you need to record them and save them for deployment when necessary – for example when you get attacked or when the opponent is in a weak position. Keep references to your own successes.

Jul 4, 2020

Another recipe! When I get asked for recipes I write them up here so that I can remember them too.

Some day I will get back on the topic of 3d printing &c, but first:

Khichdi is a dish of rice and daal that is consumed all over India. This one is specifically Gujarati. I think that this kind of dish is probably the basis for that thing called Kedgeree, which has flaked fish in it and is sometimes served as a breakfast dish in the UK and other places.

This is basic, but there’s no reason at all that you couldn’t chop extra vegetables in with it to make it a full meal. The recipe uses ghee, but if you use the vegetable ghee then this will be entirely vegan.

This recipe uses “cups”, but consider this a relative measurement! I will make some of this and weigh it out properly at some point.

- 1.5 cups rice

- 1 cup toor daal

- 1.5 tsp salt

- 0.5 tsp turmeric powder

- 2 tbsp ghee

- 1 tsp mustard seeds

- 1 tsp cumin seeds

- 1 dry red chilli

- 8 whole black peppercorns

- 5 cloves

- 1 stick cinnamon

- 5 bay leaves

- 4 or 5 cups of water

The toor daal, also known as pigeon pea, tur, tuvar and gungo peas, is a yellow lentil, and with roti or rice is a staple in India.

Wash the daal and the rice separately, and set them soaking, separately again, for 30 mins. The water should just cover the rice/daal. You might know the score here already – if there are any discoloured lentils or ones with the hulls still on, take them out. Wash the rice until you get a cleanish rinse.

Heat the ghee in a big coverable pot and when hot hot put in the mustard seeds, cumin seeds, dry red chilli, cloves, cinnamon and peppercorns. You are making a fragrant oil here that will coat everything. These should sizzle when they hit the oil – give them 5 seconds and then throw in the soaking daal, including the water! It will be a noisy business. Stir around, put the cover on the pot.

After about 10 minutes, add the rice and its soaking water, the salt and the turmeric powder. Bring the mixture to a boil for a minute or two, then reduce the heat to low, cover the pot and cook on low for 15 minutes. Take it off the heat, and set it aside, undisturbed for another 15 minutes. Don’t mess with it! Let it settle. FINALLY then you can mix it all up and start eating it.

It’s very delicious. You can put chutneys or anything through it, or hot sauce, etc. It’s great to go with a bit of veg curry.

Jun 9, 2020

This recipe comes from a Punjabi woman living in Dublin, Ireland. In her household, naan bread was a real treat, something that only appeared when there were guests over, or there was some kind of a celebration. Otherwise it was roti and chappati all the way. She called the white flour used to make this “cake flour” – so not a high-protein strong flour, but the material that is mostly called Plain Flour in the shops.

This recipe uses “cups”, but consider this a relative measurement! I will make some of this and weigh it out properly at some point.

- 3 cups plain flour

- 0.5 cup milk

- 0.5 cup yoghurt

- 2 tsp baking powder or yeast

- 2 tsp butter or ghee

- 1.5 tsp salt

- 1.5 tsp sugar

- 1 tsp kalonji seeds

Ok we need a little digression here on the topic of kalonji – it’s a plant called Nigella sativa and it’s in the Ranunculaceae family, distantly related to the good old buttercup that decorates our gardens and fields. You will find it in shops as Kalonji, or as Onion Seeds. It’s nothing to do with onions.

Sieve the flour in one big bowl, put a hole in the middle and then put in the baking powder (yeast), milk, yoghurt. Take a beer out of the fridge, open it and pour into the appropriate glass. Back to the bowl – add butter (ghee), sugar, kalonji seeds and salt. Wash your hands. Mix with your fingertips to make a dough. If it feels a bit dry, use more milk. Butter up your hands and knead it a little, then cover and leave in a warm place for 6-8 hours.

later…

Divide the dough into 10-15 balls. Roll them out into an oval shape and put the naan under a medium heated grill (for the bubbles) then cook the other side on a pan. Or cook it all on the pan, or a tandoor if you have one, whatever works, like. Hotter the better.

You can add an egg to soften the naan – restaurants tend to do this. If you want to eat the leftover naan (if any) later on, then using ghee is best for the dough.

Sep 2, 2019

Last weekend, I got an invite to visit a new Bee Sanctuary with a group of wildlife enthusiasts. The visit was organized by one of the group who has become healthily obsessed by bees in Ireland, so the focus was on inspecting vital pollinators, but there was a lot more to see.

After years of work, Clare-Louise and her husband and family are sitting in a wonderful 55-acre area of countryside with two lakes, a short walk from 8 acres of sunflowers and rows of nectar-laden native and naturalized flowering plants. Areas that have been allowed to re-wild see the appearance of wildlife you wouldn’t hear about normally - leaf-cutter bees drilling and hiding in a bench by the lake, moss carder bees - and the vertebrates are getting a look-in with otters, badgers, deer, herons, duck, rudd and others making appearances.

The Bee Sanctuary is at the end of a lane with a grassy mohawk, a first-gear experience for those with sensitive suspensions and kidneys, and is well worth a visit for anyone who enjoys the surrounds of nature.

Despite the windy day, I packed the macro lens and managed to catch some of our invertebrate buddies in action (warning, potential insect nookie).

Put this spot on your list for walks with the kids, with the camera, or just on your own when the weather is good!

Mar 27, 2019

History became legend. Legend became myth. And for one hundred and sixteen months, this following blog post passed out of all knowledge.

However it turns out Google did actually remember and I found my old blog again, and with it, a post that I reproduce in mostly its entirety here.

Warning before you begin: this post mentions Uncle Bob, who has become a bit of a problematic figure around these parts. He did give a very good talk at that EclipseCon show in 2010, though.

Oisín’s Precepts Version 1.0.qualifier

May 2010

I’m making a set of precepts that I’m going to try and stick to from a professional engagements perspective. These have been very much influenced by Uncle Bob – especially his keynote for EclipseCon 2010, which provided the inspiration to put these together in this form – and of course by the many mistakes I’ve made in the past, which is what we call experience. So, in no particular order:

Don’t be in so deep you can’t see reality.

If you haven’t communicated with a user of your software in over a month, you could have departed the Earth for Epsilon Eridani and you wouldn’t know.

Seek to destroy hope, the project-killer.

When you hear yourself saying well, I hope we’ll be done by the end of the week, then you are officially on the way to that state known as doomed. If you are invoking hope, trouble is not far away. So, endeavour to destroy hope at every turn. You do this with data. Know where you are – use an agile style of process to collect data points. Iterate in fine swerves that give you early notice of rocks in the development stream.

When the meeting is boring, leave.

Be constructive about it, however. You should know what you want to get out of the meeting. If it’s moving away from what you are expecting, contribute to getting it back on track. It won’t always go totally your way. If you can’t retrieve it, then make your excuse and leave.

Don’t accept dumb restrictions on your development process.

Pick your own example here. Note that restrictions can also take the form of a big shouty man roaring the effing developers don’t have effing time to write effing tests! (true story that).

There must be a Plan and you must Believe It Will Work.

This is pretty simple on the face of it. One theory on human motivation includes three demands – autonomy, mastery, purpose – that all need to be satisfied to a certain degree before one is effectively motivated (see Dan Pink’s TED lecture). If there is no plan, or the plan stinks like a week-old haddock, then the purpose element of your motivation is going to be missing. Would you like to earn lots of money, work with fantastic technologies and yet have your work burnt in front of your eyes at the end of the month? I wouldn’t.

Discussions can involve some heated exchanges. That’s ok, but only now and then.

Without extensive practice, humans find it difficult to separate their emotions from discussions, especially when there is something potentially big at stake. Just look at the level of fear-mongering that politicians come out with to influence voters. There will be some shouting – expect it – but it’s not right if shouting is a regular occurrence.

Refuse to commit to miracles.

How many times have I done this already over the last eighteen^h^h^h^h^h^h^h^h twenty-seven years? Ugh.

Do not harm the software, or allow it to come to harm through inaction.

No making a mess – your parents taught you that. Stick with your disciplines. Don’t let anyone else beat up on the software either. It’s your software too, and hence your problem if it is abused.

Neither perpetrate intellectual violence, nor allow it to be perpetrated upon you.

Intellectual violence is a project management antipattern, whereby someone who understands a theory, or a buzzword, or a technology uses this knowledge to intimidate others that do not know it. Basically, it’s used to shut people up during a meeting, preying on their reluctance to show ignorance in a particular area (this reluctance can be very strong in techie folks). Check out number nineteen in Things to Say When You’re Losing a Technical Argument. Stand up to this kind of treatment. Ask for the perpetrator to explain his concern to everyone in the room.

Learn how you learn.

I know that if I am learning new technologies, I can do it best provided I have time to sleep and time to exercise. I also know that my learning graph is a little like a step function, with exponential-style curves leading to plateaus. I know when I am working through problems and my brain suddenly tells me to go and get another coffee, or switch to some other task, or go and chat to someone, it means I am very close to hitting a new understanding plateau. So I have to sit there and not give in 🙂 I also know that I need to play with tiny solutions to help me too.

You have limits on overtime, know them.

This should be easy for you – if you are tired, you are broken. Don’t be broken and work on your code. Go somewhere and rest. Insist on it.

Needless to say at some point in the future this post will come back to haunt me I am sure. But I’m hoping that if I produce a little laminated card with these precepts on it, keep it in my wallet, then I’ll at least not lose track by accident.

Narrator’s voice: He lost track by accident

Mar 7, 2019

This is a two-pot stovetop affair. Veg and gravy are cooked separately and then combined.

Tempering – creating a flavoured oil for cooking in

- veg oil

- 1tsp cumin seeds

- 2 small dried red chillies

- 4 cloves

- 1 cinnamon stick

Vegetables

- 100 grams green beans chopped in large pieces

- 2 large carrots

- 3 small potatoes

- 2 cups peas

- 2 small peppers, sliced

- 1 small cauliflower

- salt

- 0.5tsp turmeric

- 450ml water

Put some oil into pot and wait till it gets hot, then throw in the tempering spices and let them sizzle for about 5-10 seconds, then lash in the vegetables, carrots first, then potatoes, roll them around and get them covered in the flavoured oil, follow up a minute later with the rest of the veg items.

Turmeric, salt and water go in next, and stir it up, cover it and let it cook, keep an eye on it so that veg doesn’t stick. Goal here is to have cooked veg in this pot, into which the gravy gets added.

In a smaller pot, it’s gravy.

Tempering

- veg oil

- 1tsp mustard seeds

- 0.5tsp cumin seeds

- 1 small dried red chilli

Gravy

- 2 medium onions, chopped

- 2 cloves garlic, squooshed

- 1tbsp grated ginger

- can of chopped tomatoes

- 2tbsp tomato purée

- 1tsp cumin powder

- 2tsp coriander powder

- 1tsp garam masala powder

- 1 fistful of unsalted cashew nuts, ground up and made into a thick paste with some water

You are going to put this through a blender to smooth it out, so the onions don’t need to be chopped super tiny. If you are not blending, then chop them super tiny!

Put some oil into pot and wait till it gets hot, then throw in the tempering spices and let them sizzle for about 5-10 seconds, then in with the onions, garlic, ginger and cook till onions are browning up. Add the can o tomatoes, purée and all the spices, stir it up and cook on low for another 10 min. Blend it all for a smooth gravy, then stir in the cashew nut paste.

Mix the gravy into the pot of veg and bate it inta ya.

Note that there is no shame in drinking the gravy out of a mug on your own in the kitchen.

Jan 5, 2019

Procrastination-breaking is the order of the month here at Lab 47b as I finally managed to finish up the first part of my embedded projects development mise en place: the computery and development environment bit.

With 2x elderly and half-bollixed laptops (MacBook1,1 / MacBookPro2,2) and 1x elderly and half-bollixed Mac Mini (MacMini2,1) to hand I crafted 2x gutted elderly laptops for the recycle pile and 1x Mac Mini with an upgraded 2GB of RAM and an upgraded 120GB SSD, running Lubuntu 18.04.

The SSD and the memory both came from the MacBook1,1 and worked flawlessly. The slightly faster memory in the MacBookPro2,1 gave the Mini indigestion such that it could only recognise one of the 2GB.

A top tip from @denishennessy got me to ditch the Arduino IDE and instead download the PlatformIO IDE, which extends Microsoft’s Visual Studio Code. This runs fine on the (relatively) low end compute of the Mac Mini.

Well! That’s enough success for one week. Now I shall briefly retire to inventory my unused microprocessor heap.

Jan 2, 2019

Welcome to 2019 – an opportunity to change the way things happen compared to the year gone by. Have you made any resolutions or goals for the new year? You have? Well keep them to yourself. Not that we aren’t interested, but because this could very well lead to you duffing it entirely. And, while aspirational goals are great for OKRs, this time you might actually want to get there, so think about setting implementation goals instead.

I’m not going to say anything about my own resolutions, however, in an unrelated event I actually finished a mini project. It’s a Naturewatch camera kit that appeared in my Christmas stocking. It goes into real-world deployment tomorrow.

Instructions to make are all available at Naturewatch. If you’ve handled any Pi / Arduino projects before it’s super simple to construct, and even if you have not, the instructions are presented really well.

Update – here’s some of the results!

Nov 20, 2018

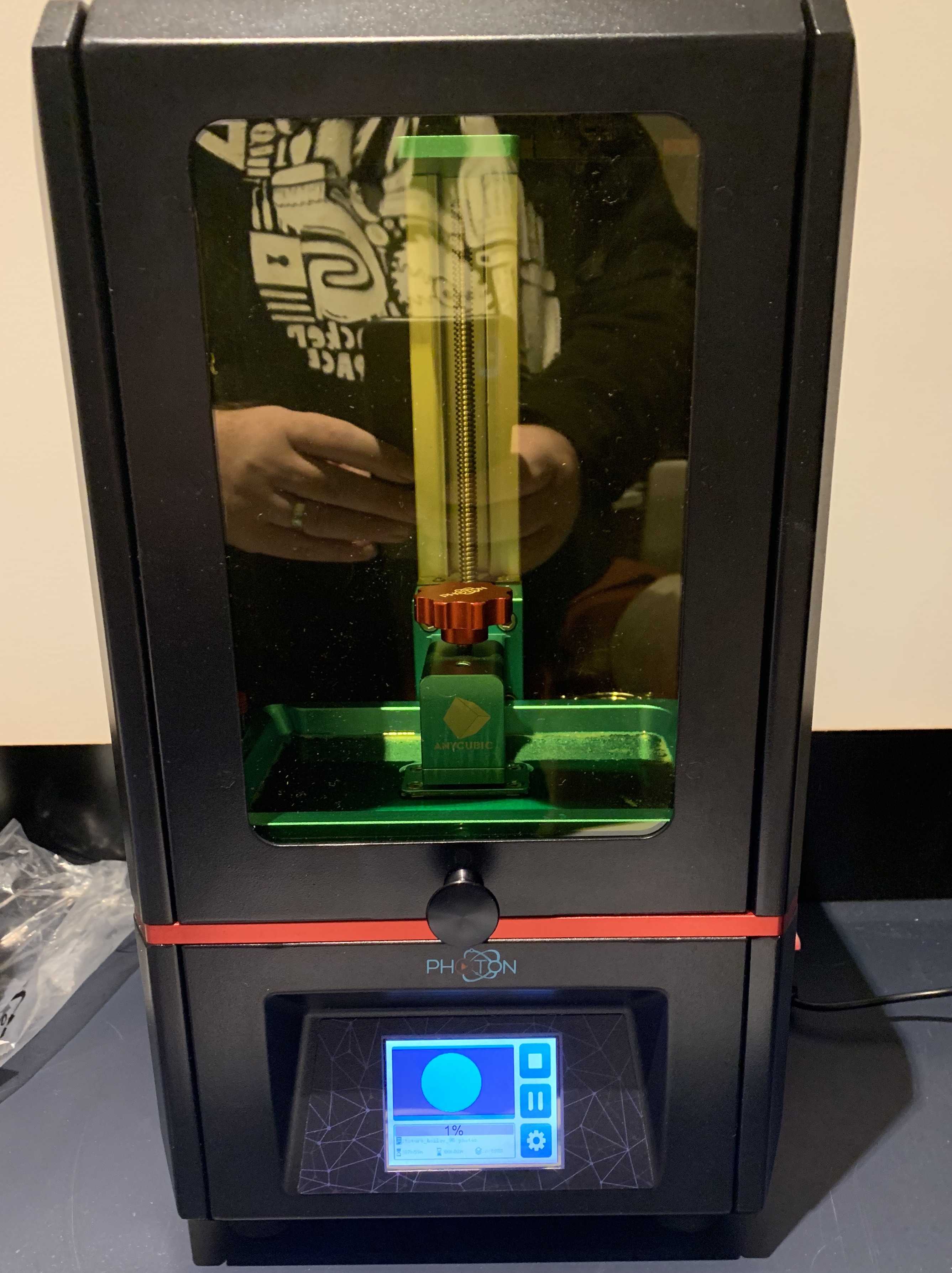

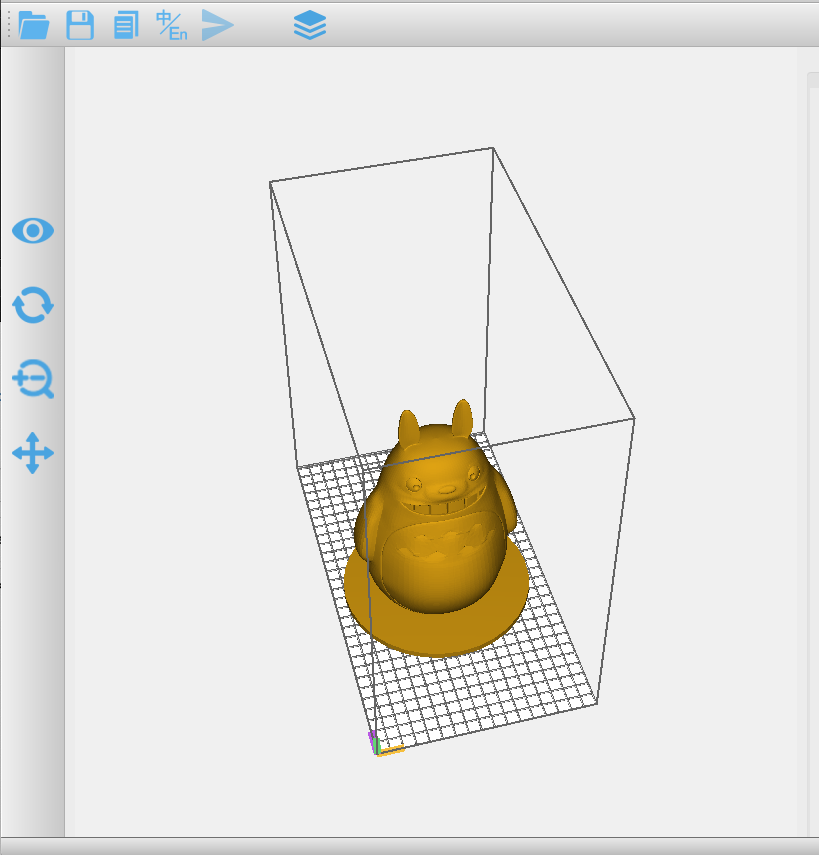

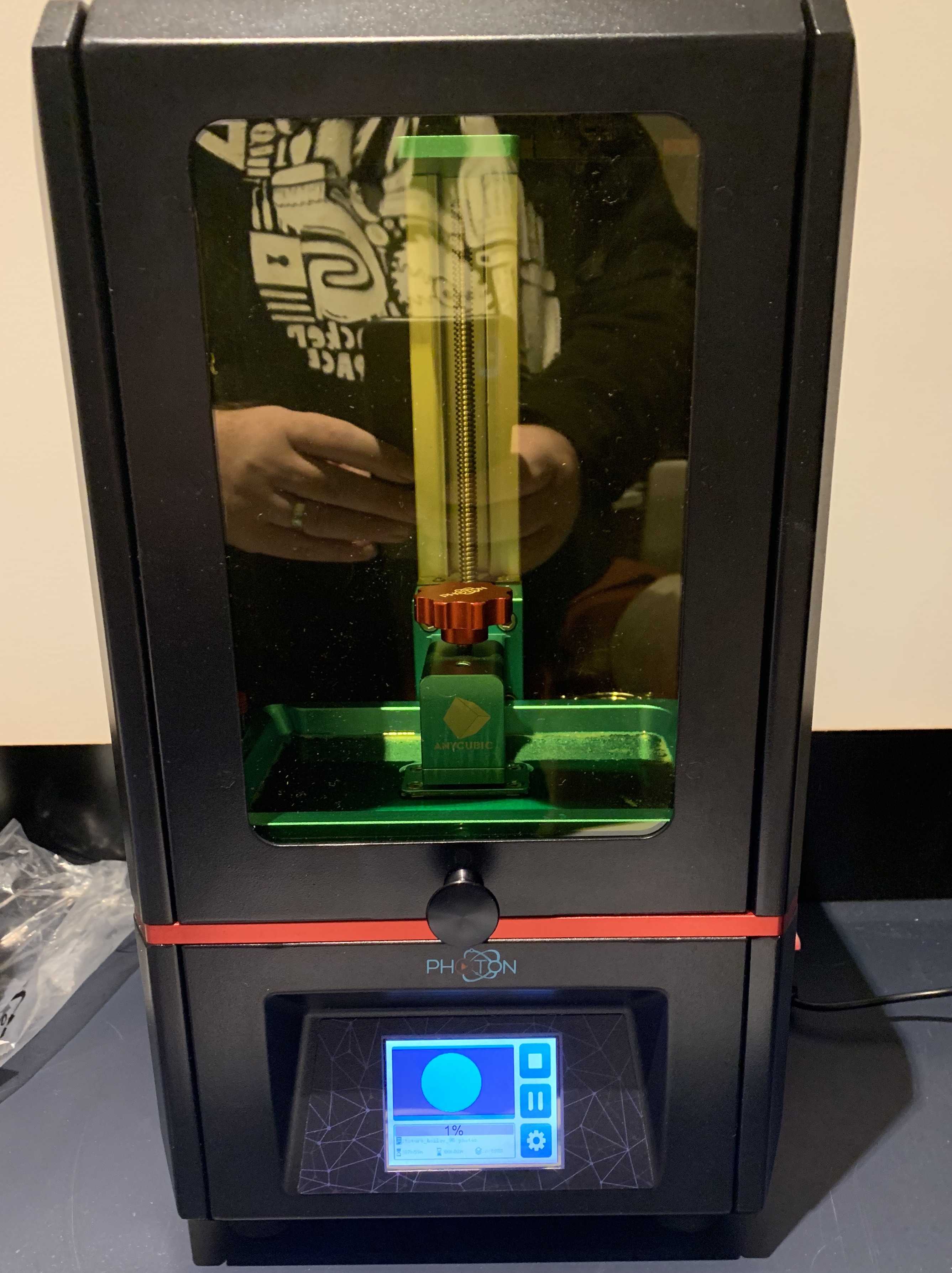

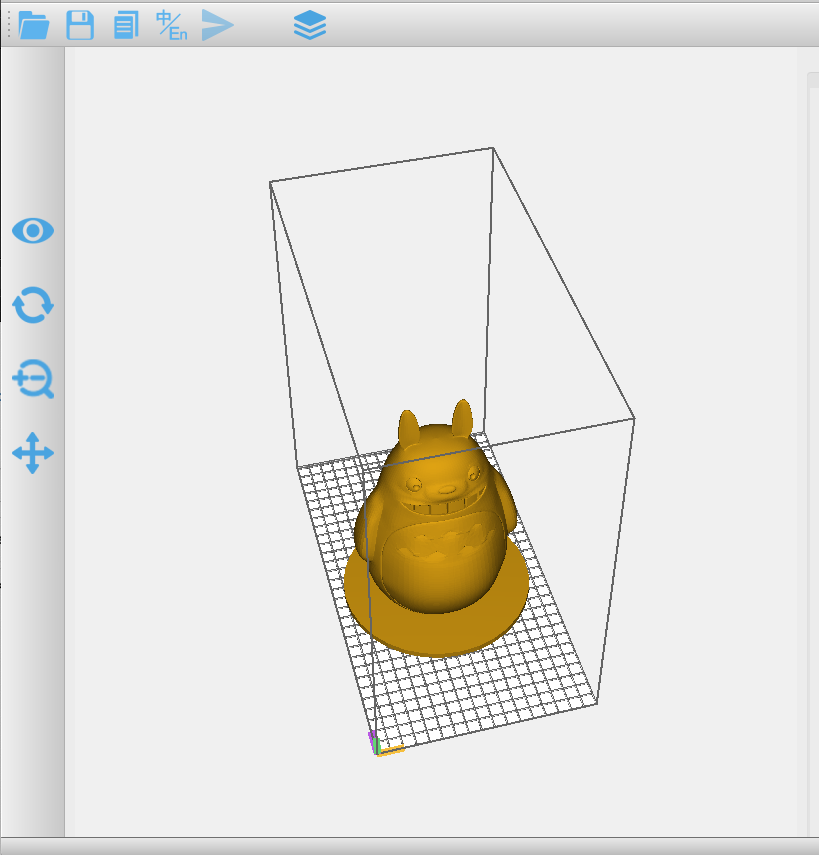

Great excitement in 47b this evening as a new member of the family arrives, in the shape of an Anycubic Photon 3D Printer. This is a resin printer, which should be considerably smoother on a print than the PLA machine that we have in work (we make software – totally unrelated).

First print has kicked off, which hopefully will result in a translucent green Totoro in about 6 hours time. Or, indeed some intolerable resiny mess all over the place and disappointment for all. With the PLA printer, I think it took 4 prints to get all the correct levelling, pre-heating and such jiggerypokery practiced enough to ensure regular successful prints.

Next up – go to bed and set the alarm for 4am to check this fella, or stay here and be overcome by resin fumes before midnight.

Nov 16, 2018

Several years ago I catalogued all my paper books using the barcode scanning capabilities of the Goodreads app and it was a task. I wanted a handheld zapparoony thing so I could just bip my way through the piles and then upload, recognize and store titles, ISBNs, etc. No reviews or sharing, just fast maintenance of my own library of 500 or so books.

“To the internet!”, I cried, only to have my hopes dashed by the pricetags on the zapparoony things that were nudging up over the $200 mark. Far too much, so the project was abandoned.

Until now.

Thanks to the fabulous trove that is Ali Express, I picked this little yella fella up for the princely sum of $24. It’s 433MHz wireless USB, is rechargeable, has an inventory mode wherein you can bip all day and then upload all the bippage at your leisure. And, the thing identifies as a keyboard! It basically writes to STDIN. In the photo there you will see an emacs window on the right with just numbers in it, these are the data from the scanner.

Next steps – automation. After an interlude of heavy bipping, I’ll need to upload all the numbers to somewhere, and have an automated process do the lookups to flesh out the details of the books. On the local machine I’ll write a wee app to do the grabbing of the STDIN and the upload to wherever the storage will occur.
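The STDIN-grabbing half of that wee app could be as small as the sketch below. It assumes the scanner sends one code per line followed by Enter, which is how it has behaved so far, and the method name is made up. It only checks the shape of each line (10 or 13 characters), not the check digit, and the upload side is left out entirely since the storage has not been chosen.

```ruby
require "stringio"

# Read scanner output from any IO (STDIN in real use), keeping only lines that
# look like an ISBN-10 or ISBN-13 and dropping repeat scans of the same book.
def collect_isbns(io)
  io.each_line
    .map(&:strip)
    .select { |line| line.match?(/\A\d{9}[\dXx]\z|\A\d{13}\z/) }
    .uniq
end

# Stand-in for a bipping session: a blank line, a double scan and some noise.
sample = StringIO.new("9780306406157\n\n0306406152\n9780306406157\nnot-a-barcode\n")
isbns = collect_isbns(sample)
puts isbns
```

In real use the call would be `collect_isbns($stdin)`, finished off with Ctrl-D once the bipping is done.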

All of this will happen in my copious spare time.

Sep 14, 2013

Another install-related entry. This time, I’m attempting to install the Ruby Gem, DataMapper, for a small project - a mini app for pulling S3/Cloudfront download stats for my Verbose (iTunes link) podcast. The app pulls a date range worth of logs from S3, then digests the logs, putting the relevant data into a Postgres database, so they can be mangled at will later.

I’ve installed Postgres.app on my MacBook Air, because I like the icon. I could have homebrew‘ed the database in, but since I don’t use it that often, it’s trivial to remove when bundled into the app. DataMapper has worked well for me in the past, so I’m sticking with it.

The whole reasoning behind using Postgres in the first place is that both AppFog and Heroku support it as their default SQL database. There’s no performance constraints, or scaling requirements here, since this is just a tiny, personal app. The main technology consideration is get-out-of-my-way-and-let-me-get-this-done-for-cheap.

But there’s always something. In this case it’s installing a Ruby gem with native extensions, when the collateral for building those extensions resides in a non-default location.

Making the install work involves using bundle config, an aspect of Bundler I didn’t know about. It allows you to override the arguments to the configure execution for native gem components. You can directly override when installing a gem, or you can register an override that will be stored in your ~/.bundle directory and remembered for future use.

Here’s the incantation that worked for me, for a once-off install:

gem install do_postgres -- \
  --with-pgsql-server-dir=/Applications/Postgres.app/Contents/MacOS \
  --with-pgsql-server-include=/Applications/Postgres.app/Contents/MacOS/include/server

Aug 25, 2013

Homebrew is my go-to tool for non-App Store or prebuilt app installs on Mac, and has been for ages, just because I’ve found it easier to use than fink or macports. There’s been precious little trouble moving to Mavericks, but Erlang is one item that didn’t install smoothly for me. The solution was to add a configure switch to specify a later version of OpenSSL that isn’t installed by default on Mavericks (on beta 3 at least). Right now, brew only supports extra configure switches through altering the brew formula, and I didn’t want to muck about with that.

With thanks to Steve Vinoski for some tips on the configuration of the Erlang source code build:

$ brew install openssl

will get a recent version of OpenSSL that Erlang likes. At the end of the install you’ll get a message

Caveats

This formula is keg-only: so it was not symlinked into /usr/local.

Mac OS X already provides this software and installing another version in

parallel can cause all kinds of trouble.

The OpenSSL provided by OS X is too old for some software.

Generally there are no consequences of this for you. If you build your

own software and it requires this formula, you’ll need to add to your

build variables:

LDFLAGS: -L/usr/local/opt/openssl/lib

CPPFLAGS: -I/usr/local/opt/openssl/include

The Erlang source build needs to know about that /usr/local/opt/openssl directory. Go download the Erlang source - if you are intending to install Elixir get the 16B01 version at least. Unpack the source, change into the source directory and issue the build configure like this (line breaks for legibility - paste-able version at this gist).

$ ./configure --disable-hipe \
    --enable-smp-support \
    --enable-threads \
    --enable-kernel-poll \
    --enable-darwin-64bit \
    --with-ssl=/usr/local/opt/openssl

Once you’ve configured the build, you might want to skip a couple of things - for example wxWindows and ODBC

$ touch lib/wx/SKIP lib/odbc/SKIP

$ make

$ sudo make install

Check that erl has found the crypto module ok

$ erl

Erlang R16B01 (erts-5.10.2) [source] [64-bit] [smp:4:4] [async-threads:10] [kernel-poll:false]

Eshell V5.10.2 (abort with ^G)

1> crypto:start().

ok

2>

User switch command

--> q

Now for Elixir, it’s just a matter of going back to using homebrew and all should behave as expected.

$ brew install elixir

$ iex

Erlang R16B01 (erts-5.10.2) [source] [64-bit] [smp:4:4] [async-threads:10] [kernel-poll:false]

Interactive Elixir (0.10.1) - press Ctrl+C to exit (type h() ENTER for help)

iex(1)> IO.puts("Woot")

Woot

:ok

iex(2)>

May 22, 2013

The closing stages of úll earlier this year featured two podcast recordings, live with the Unprofessional and The Talk Show guys. We were all in the audience, in the events room at Odessa, which happened to have a bar, and, well, we were all delighted, tired and emotional after a while, and in that fertile ground for promises and good ideas, the thought was thought - we should do this!

And, surprisingly, it happened, and you can subscribe to the first Verbose podcast. There's a twitter account @VerbosePodcast and an app.net account @VerbosePodcast for further firehosery.

The podcast itself is a rambling country garden of stuff, mostly themed around iOS and iOS development, but we pick up on other things too, like Google I/O and some of the nuisances of making software.

Yes, we will do better on the sound next time around! Sorry!

Dec 15, 2012

The Hobbit, in IMAX, was fantastic. Drain all bladders before taking your seat: there is something like 166 minutes of film, preceded by 30 minutes or more of trailers, ads, and the usual turgid mush about the mind-splittingly good technology that is going to make your experience unparalleled.

Before we get into a brief description of the thing, I'd like to note that the Hobbit probably represents the only new estate in big budget blockbusters - all the trailers here were for reboots: Superman (again), Wizard of Oz (a sequel), Star Trek (OMFG again).

This Hobbit screening was not only IMAX, it was 3D, which is generally of gimmicky quality. This, however, was very good - I couldn't suppress a flinch at an incoming spear during the Star Trek trailer, and there were times when it appeared you were looking through a hole in the wall of the cinema rather than at a screen. No doubt, the 48fps had something to do with this. Pan shots were very smooth and there definitely was a parallax-scrolling effect going on during the wide travel shots.

Top tips: Do not sit near the front. You will be too close to the screen and you won't be able to see the edges. Do not move your head around, the 3D effect appears to be delicate and even a moderate tilt will blur out parts of the picture. Do not bring excessively small humans, they have tiny internal waste storage spaces and may cause you to miss chunks of the movie when they need emptying. Do not bring a picky perfect recall of the book, loads of original material has been packed in to make a solid movie from what is really a short book constructed for the appreciation of young readers.

About twenty minutes from the end, the sound and picture streams de-synched at our showing, but by giving us free IMAX tickets for another show, the management of the cinema forestalled the impending torches and pitchforks mob.

Take the top tips above and go see it.

Feb 3, 2012

Dear @monkchips

Thank you for an exemplary conference experience at Monki Gras during a chilly and bright London January. You went deep on the craft theme and I think that resonated strongly with everyone there, because those people who were there loved and cared so much about what they do. That was the first thing that set it apart from ‘regular’ conferences.

Also there was beer, but not a surreptitious beer, not a beer that was pale and fizzy and gullished without pleasure by braying middle managers with grotesquely tumescent bellies and swollen man-boobs. This was a first-class beer that was knitted into the fabric of the conference and reflected the craft theme, that an attendee could openly savour in company in the noonday without fear of a judgement of functional alcoholism (to borrow a phrase from @jasonh). This was another welcome distancing from the mainstream, albeit I thought I had been mugged after checking my wallet post the Craft Beer Co. experience - though that is the welcome price we pay for quality.

At this moment, in an echo of the event itself, I am writing this letter, drinking a craft pale ale in a craft beer pub that has newly opened across the road from my office, a whole 45 metres away from my desk. I feel that these echoes will continue to reverberate, perhaps to the detriment of my deadlines.

Let us return to the conference.

The food, made fresh in front of our eyes, the specially roasted coffee, the baskets of fucking fruit, all spoke to a care of consumption that really is usually ignored in favour of cost in our modern lives. Care and attention, @monkchips, care and attention to the smallest detail makes the picture whole and perfect.

In your carefully crafted environment, who could not be in a positive frame of mind, content and happy to be open and honest with their neighbor, even though they may be a temporary stranger? This brought the best out in the already well-qualified and excellent speakers, brought out the humour, brought out the honest expression normally present among a group of friends rather than between a conference speaker and conference attendees. I cannot speak for others - but forty minutes into the first day I was in the front row wishing I was up there giving a talk. What a crowd!

And your conference was filled with wonders - the .gov.uk affair was heart-stopping in its implications and amazing in the fact that it actually happened; - the data pretties entranced us and were in a second breath laid bare as shams if presented without context; - kittehs masqueraded, unchecked, as chikins; - the bombshell that companies get the UX they deserve was dropped; - machines with software that killed people were wheeled out; - the dysfunctions of technology executives were outed without ceremony; - the list could go on, if it weren’t for the line drawn under it by that 9.5 ABV beer, the name of which escapes me entirely.

This letter has just about reached its limit. Let me finish by mentioning that most important aspect of conference-going - personal relevance. I’m a technical co-founder, and my work life is trisected equally into states of euphoria, all-consuming flow and cold panic. At your conference, listening to the experiences and the judgements of those who have trodden this route before, I experienced an enormous feeling of validation - that I haven’t chosen the wrong technology stack; - that I’m not totally crazy for attempting to do what I am doing; - that these people have tried and succeeded and are not that different to me after all: and for that, especially that, I thank you and your team.

all the best

Oisin

p.s also - phone is teh awesome :)

Jan 22, 2012

There’s been some buzz about CoderDojo in the news recently. The CoderDojo was founded by James Whelton in 2011 to introduce kids to coding at an age when they are still in school, in an environment that supports learning. With support from Bill Liao, CoderDojos have sprung up in nine locations around Ireland.

This is relevant to my interests, and I wanted to see what the whole thing was about. To get in, you need a kid, so I brought the ten year old rugby fanatic that hangs around my house and eats the food. He chose the Programming Games with Python session - no doubt wanting to fulfill his dream of getting a decent rugby game on his iPod touch at some point.

Let me digress briefly on the subject of teaching kids how to manipulate computers with software code. We use physical tools to supplement our physical selves - pushing a nail into a piece of timber with your thumb is rarely successful. We need to use computational tools to supplement our own mental computing capabilities and allow us to enhance our reasoning processes. We are already equipped to pick up a hammer, see a nail and get a fair idea what to do next, but pick up an iPod Touch and put a rugby game on it? It’s not obvious how to get there, so instruction is required. And instruction needs to be imparted early, not because we want to make more developers, not because we want kids choosing a career in coding, but because we want to give them the tools they need in the 21st century to allow them to take advantage of every opportunity that comes their way.

Back to the dojo. Our host Eugene had his work cut out for him: 25 or more young humans, with vastly different capabilities in the area, short attention spans and different laptops all around. I decided to take Mr. Rugby ahead with a copy of a simple Python text game involving choosing a cave and hoping the dragon therein doesn’t eat you, taken from the syllabus book.

The programming journey went like this - at each stage we thought of a goal and massaged the existing code and added new code to get to it. The kid did all the typing, saving, running, copying, pasting. I just pointed out now and then similar chunks of code that he could reuse and got him through any blocks.

- I say - let’s extend the game from a choice between two caves, to a choice between three.

- Kid says - let’s print out which cave was which at the end of the game, so you can see which was the right one.

- We discover that strings and integers were different :)

- We discover that when one chooses three random numbers in the range 1..3, there’s a good chance two will be the same, and it doesn’t make sense to have a bad dragon and a good dragon in the one cave - they are territorial.

- Kid surprises me by coming up with a simple but effective way to make sure that the numbers wouldn’t clash, and we implement it - without using the modulo operator
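The kid's own fix isn't recorded here, so what follows is only one possible way to get the same result, not his. It is a minimal sketch that assumes the caves are numbered from 1 and uses the invented names `place_dragons` and `outcome`, along with made-up result labels. `random.sample` draws without replacement, so the two dragons can never share a cave, and the `int()` call covers the strings-versus-integers discovery from earlier in the list.

```python
import random


def place_dragons(num_caves=3):
    """Pick two different caves: one for the good dragon, one for the bad one.
    random.sample never returns the same value twice, so they cannot clash."""
    good, bad = random.sample(range(1, num_caves + 1), 2)
    return good, bad


def outcome(choice_text, good, bad):
    """Work out what happens for the cave the player typed in."""
    choice = int(choice_text)  # typed input arrives as a string such as "2"
    if choice == good:
        return "treasure"
    if choice == bad:
        return "eaten"
    return "empty"


if __name__ == "__main__":
    good, bad = place_dragons()
    print("Good dragon in cave", good, "and bad dragon in cave", bad)
    print("Choosing cave 2:", outcome("2", good, bad))
```

Printing which cave was which at the end, as the kid wanted, falls out of keeping `good` and `bad` around after the choice is made.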

Then we were done with where we wanted to go. Eugene was taking the kids along, helping each individually if he needed to do so, and going down the same path initially, making an extra cave in the game.

But our local journey hadn’t finished after all. The kid had decided that now it was time to do some visuals and had sketched out a picture with three caves with numbers over them. Here’s where having a software savvy dad and the internet is a win: we grabbed a free pixel art program, muddled out how to use it, and while he drew the screen, I frantically researched free Python game libraries.

Aside - before we got onto the visuals, I spotted a few areas in the code to put in a couple of for loops, and suggested we ‘tidy up’ the code a bit so that I could show these and explain them. Why? was the response, the thing worked didn’t it? The important thing at this point is to shrug and agree - the lad is not a professional coder, he doesn’t need to refactor, he just needs results. In fact the whole thing needs to be results-driven. This is why you use a tool - not because it’s lovely or elegant or makes you feel special, but because you have a job to sort out. Then you put the tool down. It’s only when you get trained in how tools work that you can start making your own tools. cf. lightsabre etc

Eventually I stumbled over pygame, which turned out to be just the tool we needed. We made a plan to write a small programme to try it out, and there was palpable excitement when our extremely amateurish drawing of caves popped up, surmounted by a hand-scrawled Danger!! sign.

On the way home on the train, we designed a rugby-themed 8-bit scroller for the iPod Touch and set up the kid’s github account. When we arrived home the kid insisted, with uncharacteristic determination, that we finish the Dragon game - adding keyboard control, putting in dragon graphics, conveying the result visually. Next challenge: the rugby game. Yikes!

Very little of this article has been on the details of the CoderDojo, but you may have already realized how the CoderDojo was instrumental in making this tiny but important success work. It provided us (and I write ‘us’ deliberately) a place in which learning was the norm; it provided a place where myself and himself were on the same team working towards an external goal; it provided a place that wasn’t dominated by adults. And that last one is the key one I think.

Many thanks to Eugene and the team behind CoderDojo Dublin for their commitment and patience in putting the series together; they are providing a really valuable service, on their own time.

Oct 21, 2011

I don't write much about the Company, what with me being a self-effacing developer type with a disinclination to hyperbole. But we have an interesting week ahead of us. There are a couple of things happening -- the first is about learning: the first module of the iGAP3 Program, subject matter being Strategy. There's even homework! Due today! And it's not done yet! I'm writing a blog entry instead -- classic displacement activity. Provided I don't have to wear the cone of shame, I'm looking forward to the session. The second thing is about exposure: we've entered Vigill in a number of these startup competitions, one of which was the ESB Ireland Spark of Genius, which is inextricably connected to the upcoming and massively hyped Dublin Web Summit.

As an aside, if you bump into me, don't ask me how and when and what we did -- if it's not a story on Trello or a ticket at Assembla or a strange MongoDB query or trying to do capacity analysis for cloud service pricing, it's difficult to get my attention these days. I'm merely the development angle here. Barry spins the visions and Helen hunts down and corrals the customers. Myself, more than ably assisted by Steve, have to make sure that the services don't break and that we can make good on our promises and that there's some kind of costing and schedule that makes sense.

[Image: The Brownies of Reward]

Back to the competition. Last Friday we went out to a big lawyery place on St. Stephen's Green with nine other companies to pitch to the judges in the semi-final stage of the Spark of Genius. Sitting in the Green Room there was a noticeable lack of G+Ts, but plenty of coffee, which was no doubt fuelling the nervous tittering of the individuals that were there to show off in front of the panel in the boardroom.

The panel turned out to be a dozen people -- I still don't know who half of them were -- but we did our pitch, our multiply-practised 5 minute pitch, and then we toddled off, intercepted briefly by a posh lawyerly standup lunch and a bit of a hobnob with the great and the good.

Long story short, we just recently found out we had progressed to the final and there's a short article in the Irish Times with an overview of all finalists. I remember being told that one of the important goals when running a company is to keep the organization off the front page, but the technology section is good, apparently. Myself and Barry have lined the meeting room with whiteboards and are bursting our brains to try to come up with a good presentation for the final which is on Thursday 27th at 1515 on the main stage of the Dublin Web Summit. Follow the @_vigill tweetstream for more information as it comes in.

Jul 15, 2011

The good news today is that it looks like we’ve settled on an office in Capel St. as the place to grow the startup. You would think that getting space in Dublin would be easy - and yes, there is a load of office space available. You would think that you would pay a keen and competitive rate for it too, considering the depressed market, but on that point you would be very, very wrong.

We visited buildings that have lain vacant for two years and the lettors would refuse to lower their prices. This makes sense in the most perverse way - the financial way, I mean - in that lowering the rental will automatically take value from the ‘apparent’ investment worth of the building itself. These guys are heavily delusional - and they are hurting startup businesses. A letter to Enterprise Ireland has been delivered by the usual suspects.

Back to the venue itself - we’re delighted that we are sub-letting a space from another technology company that are scaling up. I plan to pick those guys’ brains on all topics relating to making startups go.

Capel St is a fantastic street. There’s a mixed-use pub and music shop. There’s pet shops, pubs, camping stores, martial arts shops, adult naughty fun stores, Asian markets, Polish markets, Sari Sari stores, more pubs, chippers, more music shops, Asian restaurants and the highest concentration of Korean Barbecues I have ever had the privilege to see in one place.

Right around the corner is a large cinema, a gym, many second-hand book shops, an early-morning fruit market and 3fe, one of the best coffee shops in Dublin city.

If you go check it out on Google Maps, you will see the street as it was about three years ago, it’s changed a bit even in that short time. More restaurants, less headshops.

At this moment in time we are searching the skips and dumpsters of Dublin looking for furniture to put into this ex-Art Gallery space. Or maybe we are going to just visit Ikea, not sure yet. Once the furniture is in, I’ll be looking for developers to keep the seats warm :)

Jun 3, 2011

Google has come up with some kind of a like-a-like called +1. For those who may have not been part of an open source development community, ever, you need to know that this convention has been in place since the internet was a child, and Google’s arse was the size of a button. The full remit of the taxonomy goes thusly

- +1 - I agree, and thanks for saving me the typing

- +0 - I don't really care, to be honest, but slight preference on it happening rather than not, i.e. I'm emotionally content to be part of the majority here.

- -0 - I've no argument one way or the other, but I dislike change, and if there's an issue I'll veto this bastard.

- -1 - THIS IS THE DUMBEST IDEA I HAVE EVER HEARD. I CANNOT BELIEVE THAT YOU HAVE THE BALLS TO BRING THIS UP WHEN YOU KNOW THAT EVERYONE THINKS IT IS STUPID. Divers alarums, lying down in the road and Godwin's Law ensue (exeunt omnes)

May 11, 2011

Update: Ok, speaker notes have turned up at this point! Also, @matthutchin did a bang-up job of editing some moody video and sound of the talk into this video+slides presentation!

Update: Still no sign of the speaker notes on Slideshare - here’s a bit of ruby to grab them from the slideshow and format them into an HTML page: https://gist.github.com/968161.

This is the talk I gave at the Ruby Ireland meetup last night. I think it went over well - there’s plenty of interest in the plethora of non-SQL style databases, but the problem is overpopulation of choice and the amount of time investment required before you can make a properly informed decision. I think the best approach is to try to find as many testimonials as possible from companies that have incorporated these newer technologies into their data storage arsenal, and read them thoroughly. These will epitomise the (fleeting) state of the art.

In an ideal world, there would be a group of volunteers running a funded lab that can create independent assessments of all the different approaches and products.

The slideshow can be found on Slideshare; Code for the sample application can be found on GitHub. Code pull requests welcome!


May 3, 2011

Versioning in APIs is vital if you want to control the lifecycle, rather than it controlling you. If you are using Sinatra to do routing for your Web API, then you can easily stuff all the version compatibility testing into one place - a filter - and not have to propagate it through all your routes. Follow the gist to see the code.

https://gist.github.com/987247
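The gist holds the actual Sinatra code. The idea itself is language-neutral, so here is a rough sketch of it in plain Python rather than Ruby, and not a copy of what the gist does. It assumes the version travels in the URL path as a `/v1/...` prefix, and both `SUPPORTED_VERSIONS` and `check_version` are hypothetical names. The point is that one function runs before any routing and is the only place that knows which versions are still served.

```python
import re

# Hypothetical: the API versions this service still supports.
SUPPORTED_VERSIONS = {1, 2}


def check_version(path):
    """Run once before routing: return (version, rest_of_path),
    or raise ValueError if the version is missing or unsupported."""
    match = re.match(r"^/v(\d+)(/.*)?$", path)
    if not match:
        raise ValueError("no API version in path")
    version = int(match.group(1))
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported API version: {version}")
    return version, match.group(2) or "/"


if __name__ == "__main__":
    print(check_version("/v1/books"))  # → (1, '/books')
```

Individual routes then only ever see the remaining path, so retiring a version means changing one set rather than touching every route.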

Apr 29, 2011

On May 10, I’ll be giving a talk on Constructing Web APIs with Rack, Sinatra and MongoDB at the monthly Ruby Ireland presentation gig. It will take place at Seagrass in Portobello, Dublin. Kickoff is at 7pm.

Full details, with maps, links and a rash promise of free food from Kevin Noonan, can be found in this posting to the Ruby Ireland group. You will need to sign up for the nosebag.

I aspire to having the talk slides up on Slideshare beforehand - this topic may be old hat to some of you hard-core Ruby types, but it’s relatively new and interesting to me, and may be so to others. In any case we’ll be looking at some new stuff, in the case of MongoDB, and having some fun with it.

Bí ann nó bí chearnóg, mar a deirtear (be there or be square, as they say).

Hope to see you there.

Mar 21, 2011

EclipseCon 2011 started today, with an awesome program of events going on over the week. I’ve attended the previous four instances of the conference, and had the signal honour to have been the Program Chair for EclipseCon 2010. Now, alas, I’ve lapsed. Instead of getting a nice easy job after my release from Progress last year, I’ve foolishly decided to start up a software business with a couple of guys. I hope this adequately explains my somatic non-presence at this year’s showcase of what’s wonderful in the Eclipse world. For example - if I was in California today, I would not have been able to load the mobile part of our product on the phone of a friend-of-a-friend who just happens to be attending a concert with the CEO of a division of a large cellphone company, with instructions to show it to this CEO and get a meeting for us. But if I was in California today, I would be enjoying beers with lots of people that are much smarter and much more dedicated than me.

It’s always a tradeoff.

Now that I have a brand new ‘user’ perspective, and am on the outside looking in, there are a few items within the Eclipse Ecosystem that are particularly interesting.

- EGit - I’m using git exclusively now for source code control, and having good support is very important. I think that the real decision-maker on using git all the time is hosting services like Heroku and Nodester adopting git push workflows for application deployment.

- DSL-based mobile device project scaffolding - projects like Applause and Applitude, both based on Xtext and mobl, based on Spoofax, can give you a chunk of starting point code to get your mobile application up and running on the cheap. I haven’t taken as much time to study these as I want to - a future blog entry I think.

- Orion - when I first saw the Bespin project, which then merged with Ace and is now the development-as-a-service Cloud9 IDE, it failed to stir me. However, when I started doing some node.js project experiments, then the option of being able to edit your JS code through the browser suddenly had more appeal, precisely because now you can edit server code. It will be worth a blog entry in its own right at some point (aside - Mr. Orion, @bokowski, just linked to another one, Akshell, a minute ago)

I just realized the other day that the last piece of Java/Eclipse programming I did was in July 2010. Since then I’ve been in this dual world of the mobile app developer - programming native code on iOS devices, programming Rails 3 and node.js on the server end of things, pushing data into PostGIS and Redis. I didn’t think I would end up here, but it’s been fun so far :) As to the future, I’ve refused to plan anything beyond world domination for the moment. But maybe I can get an Orion talk accepted for EclipseCon Europe…